I was initially drawn to Sheffield to work with Yorick Wilks, someone I had admired since my PhD days and working with LDOCE. I worked on the proposal for Companions (and its unsuccessful predecessor, Copain) and spent a few months working for Yorick before deciding that it was all too hard.

I did, however, do some preliminary work with him as part of the Humaine NoE.

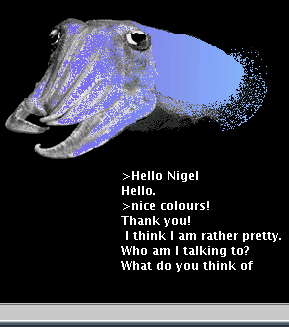

Nigel the Cuttlefish is a chatbot that uses colour to express emotion. He uses the Belief, Desire and Intention (BDI) agent architecture to balance reactive behaviour (answering questions, greetings, etc.) with deliberative behaviour (planning to get compliments, for example). Nigel uses information extraction techniques to understand the user's utterances, and templates to generate text. He is not that sophisticated, but using BDI as the dialogue manager means he is more believable than many chatbots (see the discussion of believability here).
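To make the reactive/deliberative balance concrete, here is a minimal sketch of a BDI-style dialogue loop. It is not Nigel's actual code: the class name `Cuttlefish`, the regular-expression patterns standing in for information extraction, the reply templates, and the colour choices are all assumptions invented for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical patterns standing in for information extraction.
PATTERNS = {
    "greeting": re.compile(r"\b(hello|hi|hey)\b", re.I),
    "compliment": re.compile(r"\b(nice|lovely|beautiful|clever)\b", re.I),
    "question": re.compile(r"\?\s*$"),
}

# Hypothetical templates for text generation.
TEMPLATES = {
    "greeting": "Hello! I am {name}.",
    "question": "Good question. I am only a cuttlefish, though.",
    "thanks": "Thank you! That makes me glow.",
    "fish": "Do you like my colours today?",
    "idle": "Tell me more.",
}


def extract(utterance: str) -> set:
    """Return the labels of every pattern found in the utterance."""
    return {label for label, pat in PATTERNS.items() if pat.search(utterance)}


@dataclass
class Cuttlefish:
    name: str = "Nigel"
    beliefs: dict = field(default_factory=lambda: {"complimented": False})
    desires: tuple = ("be_complimented",)
    intention: Optional[str] = None
    colour: str = "grey"  # colours are illustrative, not Nigel's real palette

    def step(self, utterance: str) -> str:
        acts = extract(utterance)

        # Belief revision: a compliment satisfies the standing desire.
        if "compliment" in acts:
            self.beliefs["complimented"] = True
            self.intention = None
            self.colour = "gold"
            return TEMPLATES["thanks"]

        # Reactive behaviour: greetings and questions are answered at once.
        if "greeting" in acts:
            self.colour = "blue"
            return TEMPLATES["greeting"].format(name=self.name)
        if "question" in acts:
            self.colour = "green"
            return TEMPLATES["question"]

        # Deliberative behaviour: nothing to react to, so commit to an
        # intention that serves an unsatisfied desire and act on it.
        if "be_complimented" in self.desires and not self.beliefs["complimented"]:
            self.intention = "be_complimented"
            self.colour = "pink"
            return TEMPLATES["fish"]

        self.colour = "grey"
        return TEMPLATES["idle"]
```

The design choice in this sketch is that reactive replies take priority, and the agent only pursues its own goal when the user's utterance does not demand a response. Once the compliment arrives, the belief changes and the fishing stops.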


The key thing to come out of the CA-EM literature for me, however, was Paul Seedhouse's overview of lessons learned from CA, which provides an explanation for why people swear at chatbots. The blurry picture to the right is taken from a movie of a dog attacking an AIBO. The critical point for me is that the dog warns the robot twice before throwing it across the room (the yelling is apparently the researchers worried about their AIBO; it is okay, by the way, if you were worried too). Why does the dog warn, and how is the AIBO meant to know that it is being warned? The hypothesis is that the dog is using a species-specific, hard-wired behavioural norm to socialize the AIBO. If the AIBO had been a puppy, it would be hard-wired to understand the significance of the bared teeth and the growl, and would learn not to interrupt a dominant dog's meal.

Are there human equivalents of these species-specific, hard-wired behavioural norms? Yep. Seedhouse summarises the last 50 years of CA research with the high-level observation that an utterance in a conversation will either go seen but unnoticed (people answer your questions and greet when greeted), be noticed and accounted for (you can figure out why he or she said what they said), or risk sanction. When people abuse chatbots, the chatbot is being sanctioned. Like the dog in the movie, we humans are hard-wired to socialize the socially inept.